Will AI Replace My Job?

A view from the front lines of AI development

In the past 48 hours, I’ve heard from nearly 20 people worried AI is about to take their jobs. Citrini Research’s “AI 2028 Global Intelligence Crisis” essay poured gasoline on an already tense moment. It sketches a future where AI wipes out traditional software, upends industries, and displaces huge swaths of workers in an economic death spiral.

Since its release, much of the conversation has been driven by economists and finance voices modeling top-down outcomes. Those perspectives matter. But they often gloss over the mechanics of how displacement would actually unfold.

At Forum AI, we spend our days evaluating AI systems in real-world environments, across complex and high-stakes domains. What we’re seeing on the ground is both powerful and more nuanced than the headlines suggest.

No one can predict the future. But we can reason from what we are actually seeing in production AI systems today. Instead of arguing over whether all white-collar jobs vanish by 2028, we can get specific about what the path to displacement would look like, and how close various industries really are to each milestone along it.

How will AI ‘replace humans’?

The claim that AI will displace large swaths of white-collar workers in the next one to two years is the most jarring part of the essay, and central to its thesis. Yet it is also the least explained.

“AI got better and cheaper. Companies laid off workers, then used the savings to buy more AI capability, which let them lay off more workers. [...] A feedback loop with no natural brake.” —AI 2028 Global Intelligence Crisis

If AI reshapes white-collar work, it will not happen all at once. It will unfold in stages. There is a strong body of research on how technologies diffuse, and we can already see a version of this pattern in how AI is being adopted in software engineering. Both suggest a sequence like this for any given use case in a given job or sector:

Augmentation

At first, AI meaningfully assists a human doing the work they’ve already been doing. It does not replace the workers outright, but it expands their individual output. That increased capacity can translate into fewer hires or “efficiency” layoffs over time.

Example: Software engineers using Claude Code to write and refactor code faster, enabling the same output with fewer people and triggering efficiency-driven layoffs and slower hiring.

Full task ownership

Next, AI fully and independently performs work once handled by a specific person. Displacement becomes more direct at the task level, but humans can still move up a layer to set direction and provide oversight.

Example: An engineering or product manager assigns a coding agent to build and maintain the company’s software stack end to end.

Autonomous coordination

Finally, and unlike earlier technologies, AI may identify priorities, delegate to other AIs, and oversee execution. This is where AI adoption would become fundamentally different from past technology transitions. If AI can truly manage AI, the human layer starts to disappear more completely. This is the idea Chamath Palihapitiya explores in his recent essay, “Making A Machine That Builds Machines.”

Example: An AI engineering or product manager sets strategy on its own, then assigns a coding agent to build and maintain the software.

What’s stopping AI from ‘replacing humans’ today?

So far, AI has not replaced white-collar workers. In most domains, we are still in the augmentation phase, with a few exceptions, such as parts of software engineering, edging toward full task ownership. If, à la Citrini, replacement is going to happen within two years, what has to be true? Spelling that out matters.

Much of the rapid human-replacement narrative leans heavily on benchmark results, especially METR’s “Time Horizons” evaluation, which tracks the length and complexity of real-world tasks that frontier models can complete autonomously. Those results show impressive progress, but there is an important distinction between completing ever-longer tasks on structured problems (mostly in software engineering) and replacing entire job functions. True displacement depends on several other factors.

My strong sense is that the barriers to replacing humans look very different across industries, roles within those industries, and use cases within those roles. To stay practical, though, we can lay out a more holistic set of requirements that would need to hold for AI to replace humans in a given domain, say family medicine or software engineering.

Technical capacity: The baseline is straightforward. AI must perform the job as well as, or better than, a human. But jobs are multidimensional. They require a full range of modalities and skills working together. A doctor does not just classify symptoms. They listen, observe, read emotion, reason through tradeoffs, communicate clearly, coordinate with other doctors, and carry out care plans. Any serious claim that AI can replace humans in a domain, rather than assist them or automate one part of their job, has to assess that full stack in context, not isolated tasks. Such holistic evaluations are exactly what we focus on at Forum AI.

Consistency: I can hit a perfect golf shot now and then, but that doesn’t put me on the PGA Tour. What separates amateurs from professionals is consistency. AI can produce impressive outputs, but replacing humans requires sustained, reliable performance. Today’s systems are non-deterministic, meaning the same prompt can produce different results because of the statistical way they generate output. Meanwhile, AI’s capacities for long-term memory and continuous learning are still evolving, and both are critical to LLMs performing consistently. Consistency should be seen as a central factor in whether AI can truly take over a role in a given domain.

Regulation (in some cases): I’ve worked in both education and healthcare technology, and I’ve seen that the bottleneck is often not the tech itself. In regulated domains like healthcare, education, and government, what matters is not just whether AI can replace humans, but whether we are willing to let it. This is a policy question as much as a technical one, and it is likely to loom larger in the years ahead. For example, recent OMB guidance on AI neutrality is already shaping how federal agencies procure and deploy systems, and has become a major focus of our client work.

Trust: A recent Forbes article argued that as AI matures, trust becomes the new battleground. That tracks with what I have seen in market research and in direct work with labs. But “trust” looks different across different domains. A coding agent needs the confidence of engineering and product leaders. A healthcare agent needs the confidence of clinicians and patients. Those are very different bars to clear.

Trust will likely be a real constraint on displacement-level adoption, especially in high-stakes or less tech-fluent domains. It is not surprising that coding agents are moving the fastest. Their users understand the technology, their work is immediately checkable, and the downside risk is rarely life or death.

It’s important to remember that, at the end of the day, the decision to replace a human needs to come from a human.

When will AI ‘replace humans’?

This is the trillion-dollar question. No one knows. But with a clearer framework, we can do better than consigning all white-collar jobs to the dustbin by 2028.

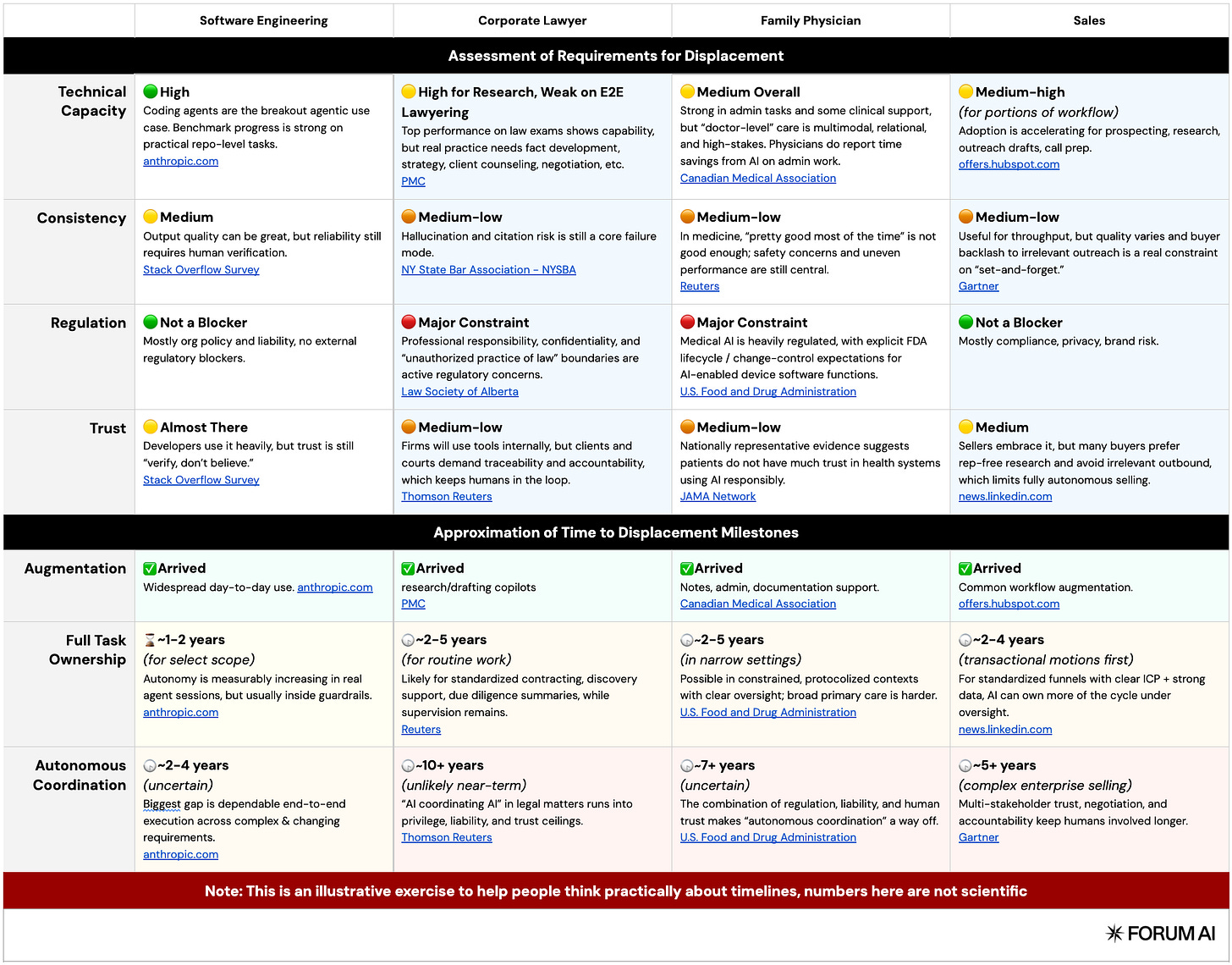

To make this concrete, we’ll walk through several broad domains and assess their trajectory against the four requirements outlined above: technical capacity, consistency, regulation, and trust.

To offer a reasonable assessment, I’ll use software engineering as a reference point. It is arguably the domain closest to meaningful automation, with growing consensus that we are nearing the second phase of adoption, full task ownership. I’ll also discuss the comparison sectors as a whole, even though specific fields, jobs, and tasks within them may move toward automation at different rates.

But, to be clear, this is not a scientific forecast. It is a thought exercise, meant to help people reason productively about something that is highly relevant to their lives.

I’ve included an assessment of four white-collar domains as examples, but this framework can be applied more broadly. The goal is to ground the conversation and give us a clearer way to think about AI’s trajectory in the areas that matter most to us.

What does it actually mean for AI to ‘replace humans’? (And are we doomed?)

There is a critical difference between replacing jobs humans do today and replacing humans altogether. Those are not the same conversation.

In each of the three phases outlined above, which roughly track how people talk about AI “replacing humans,” displaced workers may find new ways to create value. Historically, technological shifts have done exactly that.

Still, AI’s potential to autonomously coordinate, the third phase, does raise the stakes. In theory, it could absorb both task-level and higher-order white-collar work, narrowing obvious paths upward, something previous technological shifts have not done over the long term. Even so, there are reasons to believe humans will continue to find long-term purpose. Here are three.

The future is harder to predict than we realize.

Imagine trying to explain what a “B2B SaaS Sales Rep” is to a farmer in the 1600s. Sometimes change is so profound that it becomes nearly impossible to imagine what the future will look like. I believe we’re in one of those moments now. But just because we can’t imagine a future where we have a clear purpose doesn’t mean it won’t exist. Remember, for most of human history 80-90% of us were farmers (who likely could never have understood B2B SaaS).

Of course, none of this means the transition will be smooth. Humans have always found purpose, but often through social and political turbulence. Think of the Luddites, or more recently globalization, the Rust Belt, and the dislocation that followed. Even if every job is not gone by 2028, that does not mean we avoid upheaval or painful economic shifts. But there’s probably a light at the end of the tunnel.

“Nothing is ever as good as it first seems and nothing is ever as bad as it first seems.”

This quote sits atop Rodney Brooks’ latest ‘tech prediction scorecard’, and it feels fitting. To be clear, I’m AI-pilled. I think AI will drive enormous change, with real economic consequences, including job losses. But I also think reality will be more nuanced. If you look at Rodney’s site, where he revisits bold predictions over the years, you’ll notice a pattern. Spoiler: bold predictions tend to be wrong.

Another great source is Pessimists Archive. It is essentially a running catalog of past technological panics, from bicycles to televisions to ATMs, each one met with confident predictions of social collapse. The pattern is not that technology has no impact. It is that our forecasts tend to overshoot in the short term and underestimate how adaptation actually unfolds.

There is something inherently valuable that humans offer.

A study from the CFA Institute found that for people with limited investing experience, the primary source of financial advice is still family, more than Google, YouTube, or ChatGPT. It is unlikely that parental advice is objectively superior on average. What it suggests instead is that when decisions matter, people gravitate toward other humans.

That instinct is not about raw information. It is about accountability, empathy, and shared experience. When the stakes feel real, I believe people want to engage with someone who understands what it means to live with the consequences. A spreadsheet can optimize a portfolio. An algorithm can surface historical returns. But neither has to sit across the dinner table if things go wrong.

If these patterns hold, displacement will be more complex than a simple capability curve. AI will expand what is possible, compress headcount in many areas, and fully automate some roles. But across many domains, including ones that do not yet exist, the human layer may persist longer than the models alone would suggest.

That does not mean change will be easy. It means it will be human.

Robbie is co-founder of Forum AI, a company that brings trusted human experts into the loop to help large labs and smaller companies build AI that people can trust.